Nishit Anand

I am a final-year MS CS student at the University of Maryland, College Park. My research focuses on multimodal LLMs, AI agents, and audio models, under the guidance of Prof. Dinesh Manocha in the GAMMA Lab and Prof. Ramani Duraiswami in the PIRL Lab.

In Summer 2025, I worked as a Research Scientist Intern at Adobe Research San Francisco, where I built the first speech model that can understand both the paralinguistic and acoustic aspects of speech. I have also collaborated with NVIDIA on multiple research projects, including Audio Flamingo Next, part of the Audio Flamingo series of models, and MMOU, a large-scale omni-modal benchmark.

Before my Master's, I worked as a Research Scientist at Radien, a Seattle-based AI startup funded by the Allen Institute for AI (AI2). There, I built agents that predict visual similarity between UI elements and intelligently simplify codebases. I was also the Founding Research Engineer at Ananas Labs, a Bengaluru-based startup founded by a former Staff Research Scientist at Google Research India. I developed our low-latency Indic-SpeechLLM, optimizing it for our app through model quantization. I also researched a novel multilingual phoneme-based tokenizer to improve token efficiency in Indic languages.

Prior to my industry roles, I was a Research Fellow at the Vision and Graphics Lab, IIT Delhi, under Prof. Chetan Arora. On a government-funded project, I created state-of-the-art Vision Transformer-based OCR models for 13 Indian languages. I deployed them with inference optimization and caching techniques for higher throughput. Earlier, as a Research Engineer at the Autonomous Networked Systems Lab at IIIT-Delhi under Prof. Saket Anand, I led the Driver Status Monitoring (DSM) module of a $300K government-funded autonomous driving project. In that role, I trained and optimized facial landmark detection and object detection models for deployment on our custom Android app and NVIDIA Jetson devices.

I completed my B.Tech (Honours) in Computer Science at Jaypee Institute of Information Technology Noida (JIIT Noida) in 2022. I graduated in the top 5% of the CS department and earned the highest grade in every final-year course. I have also had the privilege of collaborating with Prof. Salman Khan, Prof. Mohamed Elhoseiny, and Prof. Ruohan Gao, among other exceptional mentors.

Email / CV / Google Scholar / GitHub / LinkedIn

News

Research

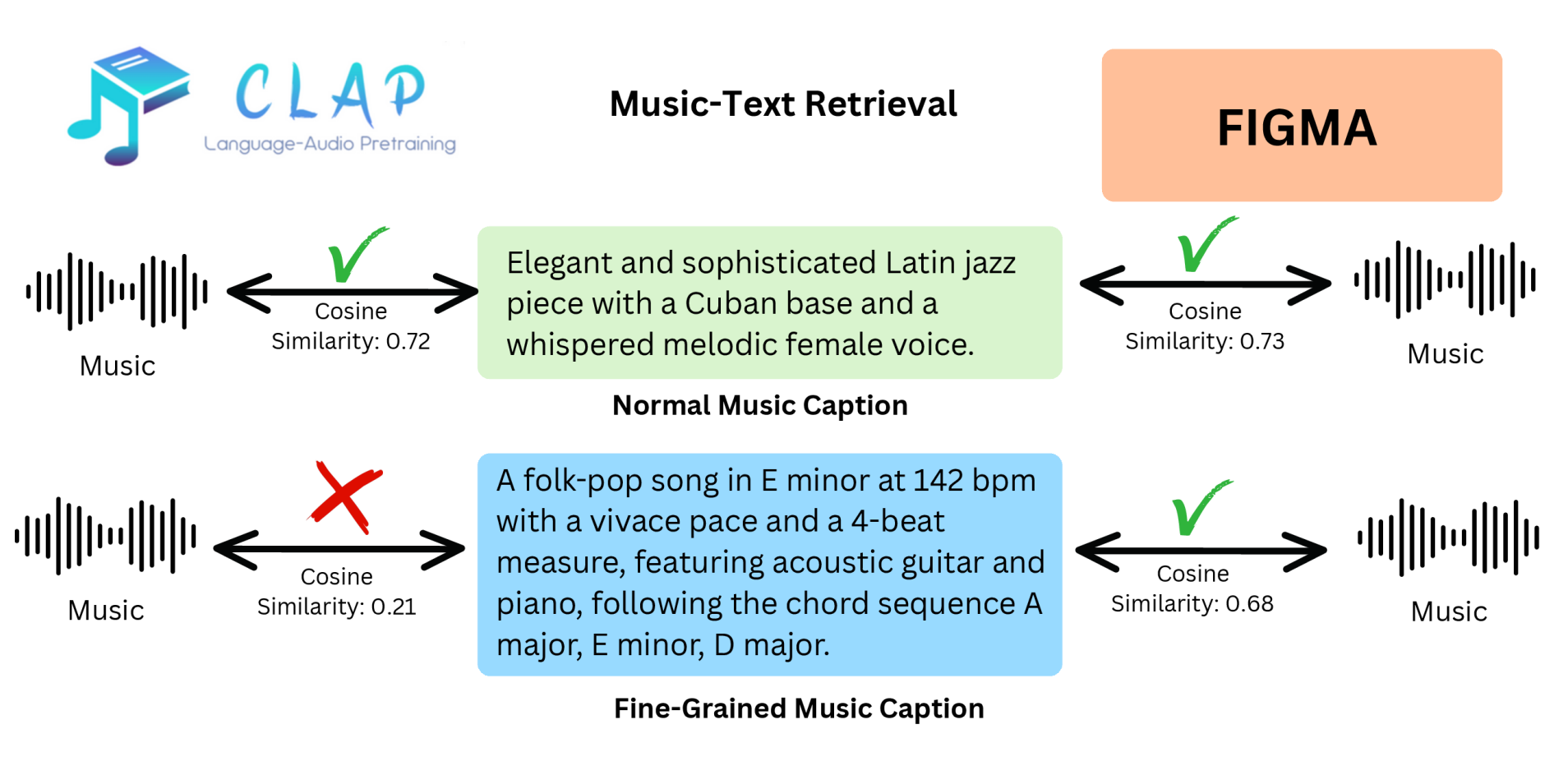

FIGMA: Towards Fine-Grained Music Retrieval

Nishit Anand, Ashish Seth, Sreyan Ghosh, Dinesh Manocha*, Ramani Duraiswami*

ACL, 2026

paper

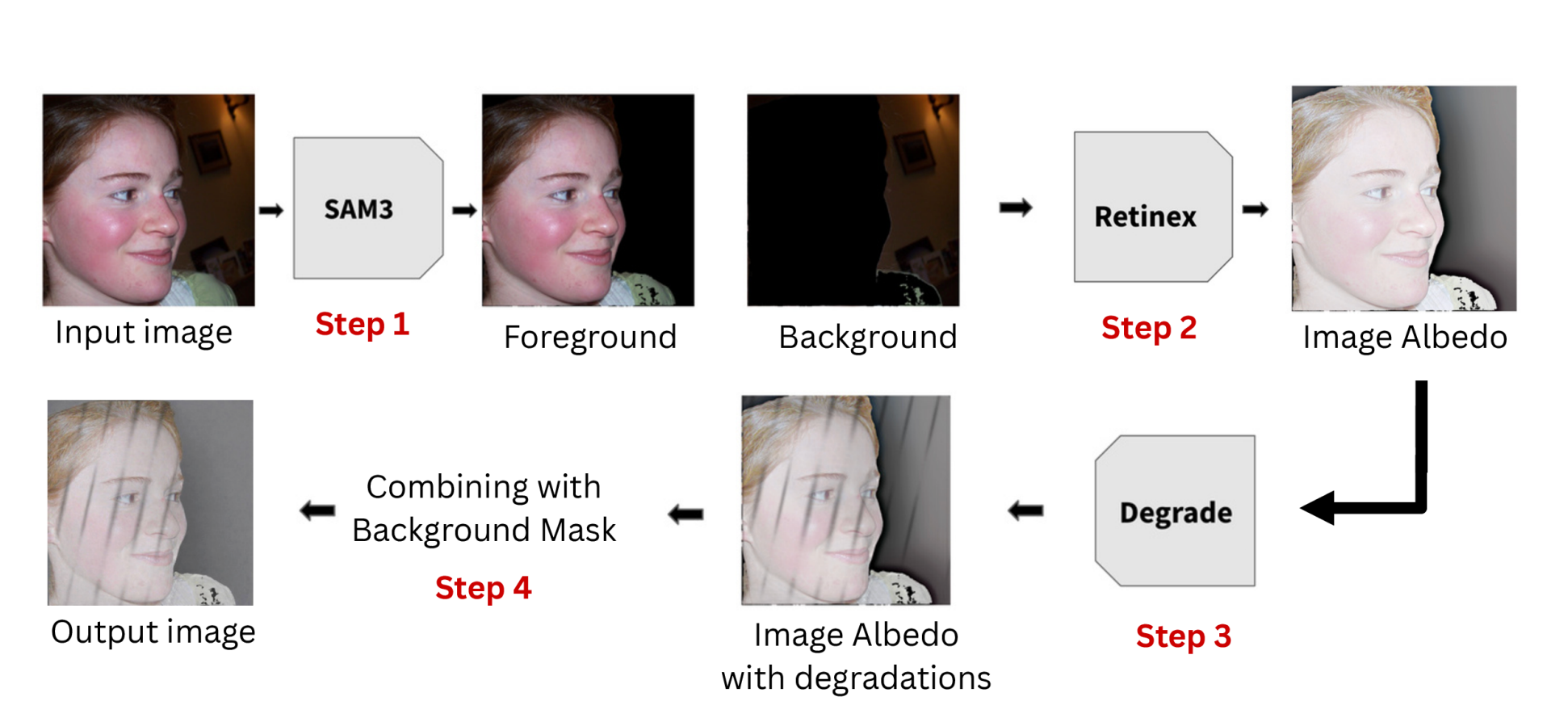

Learning Illumination Control in Diffusion Models

Nishit Anand, Manan Suri, Christopher Metzler*, Dinesh Manocha*, Ramani Duraiswami*

ReALM-GEN Workshop on Diffusion Models, ICLR 2026

arXiv

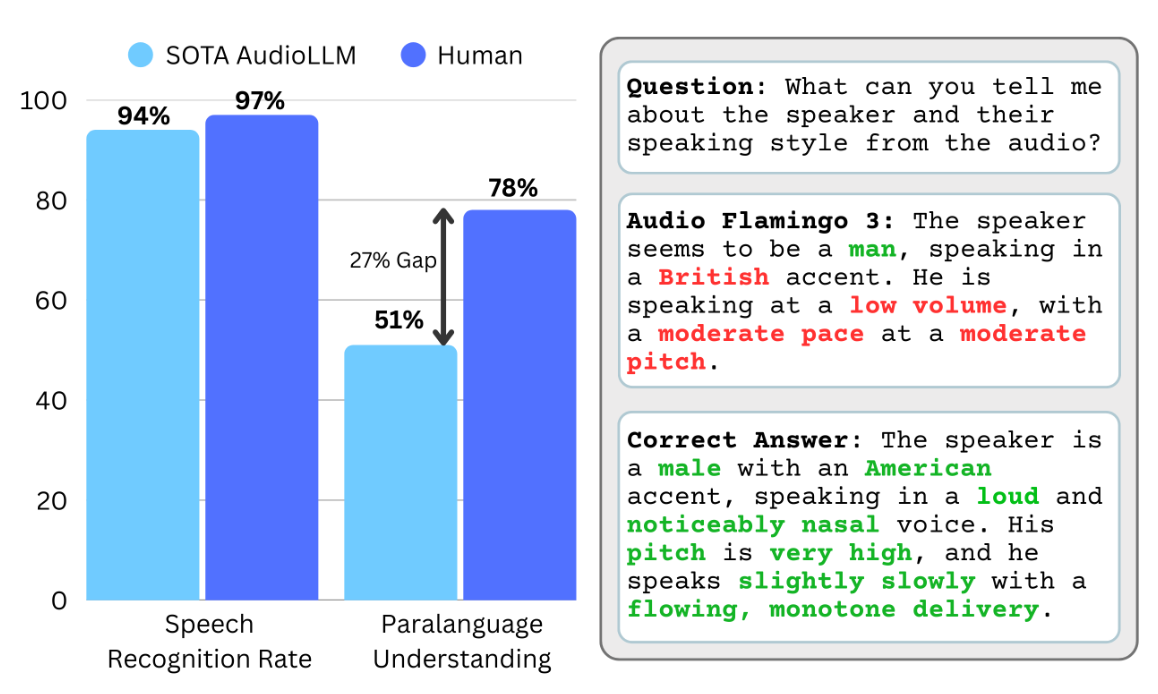

ParA-LLM: A Unified Approach to Paralinguistic and Acoustic Speech Understanding

Nishit Anand, Jiaqi Su, Ke Chen, Yunyun Wang, Dinesh Manocha, Ramani Duraiswami, Rithesh Kumar, Zeyu Jin

paper

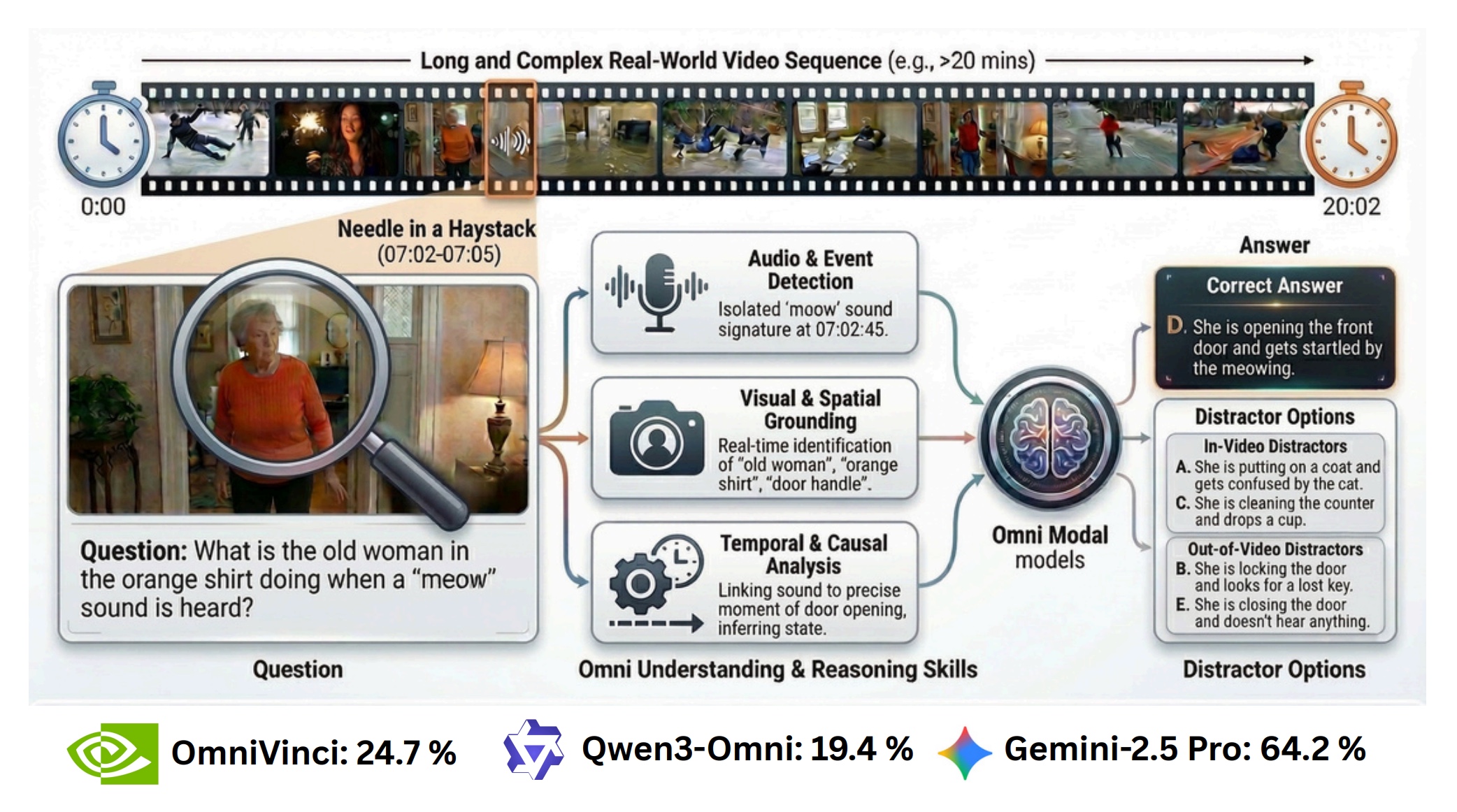

MMOU: A Massive Multi-Task Omni Understanding and Reasoning Benchmark

Arushi Goel*, Sreyan Ghosh*, Vatsal Agarwal*, Nishit Anand*, Kaousheik Jayakumar, Lasha Koroshinadze et al.

arXiv, 2026

arXiv

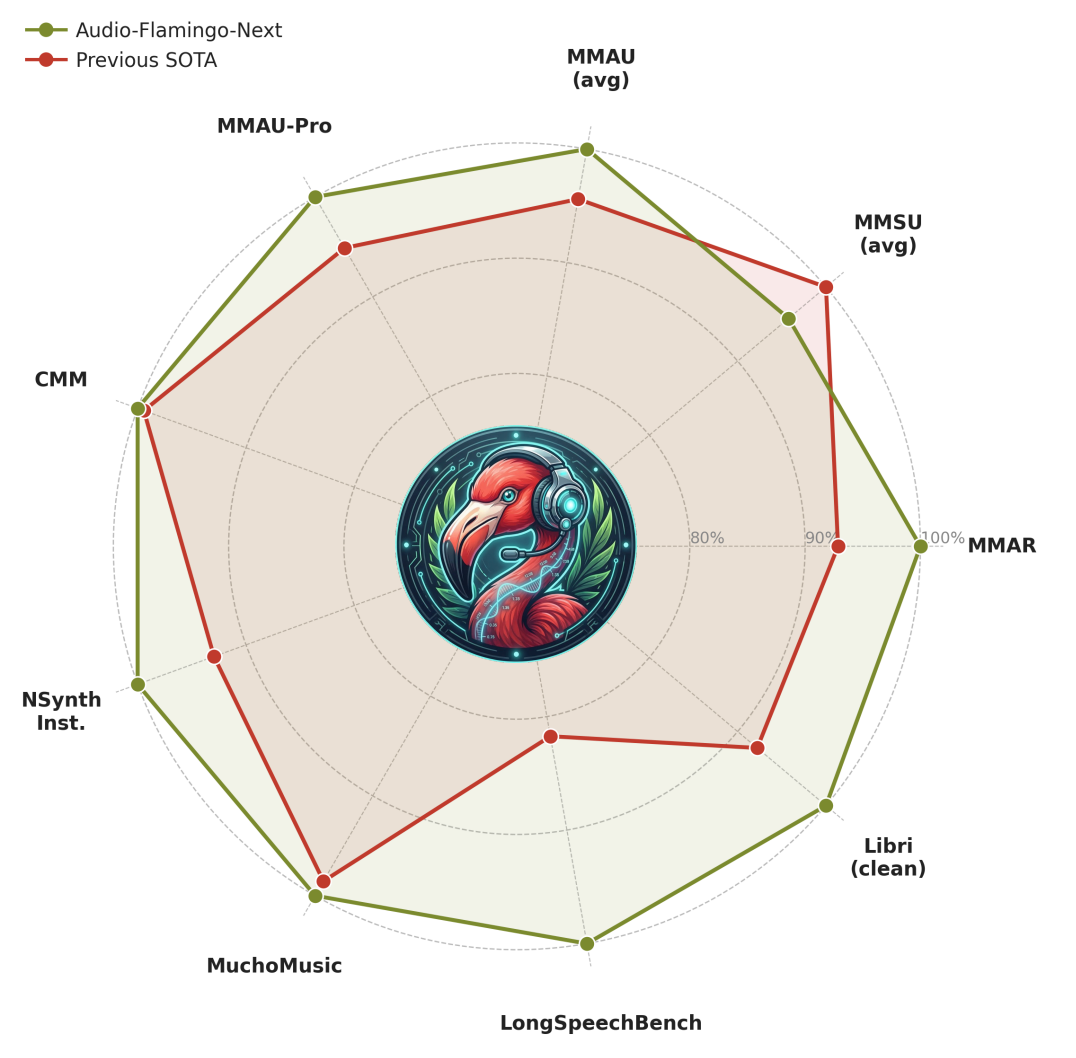

Audio Flamingo Next: Next-Generation Audio-Language Models for Speech, Sound, Music

Sreyan Ghosh, Arushi Goel, Kaousheik Jayakumar, Lasha Koroshinadze, Nishit Anand, Zhifeng Kong et al.

arXiv, 2026

arXiv

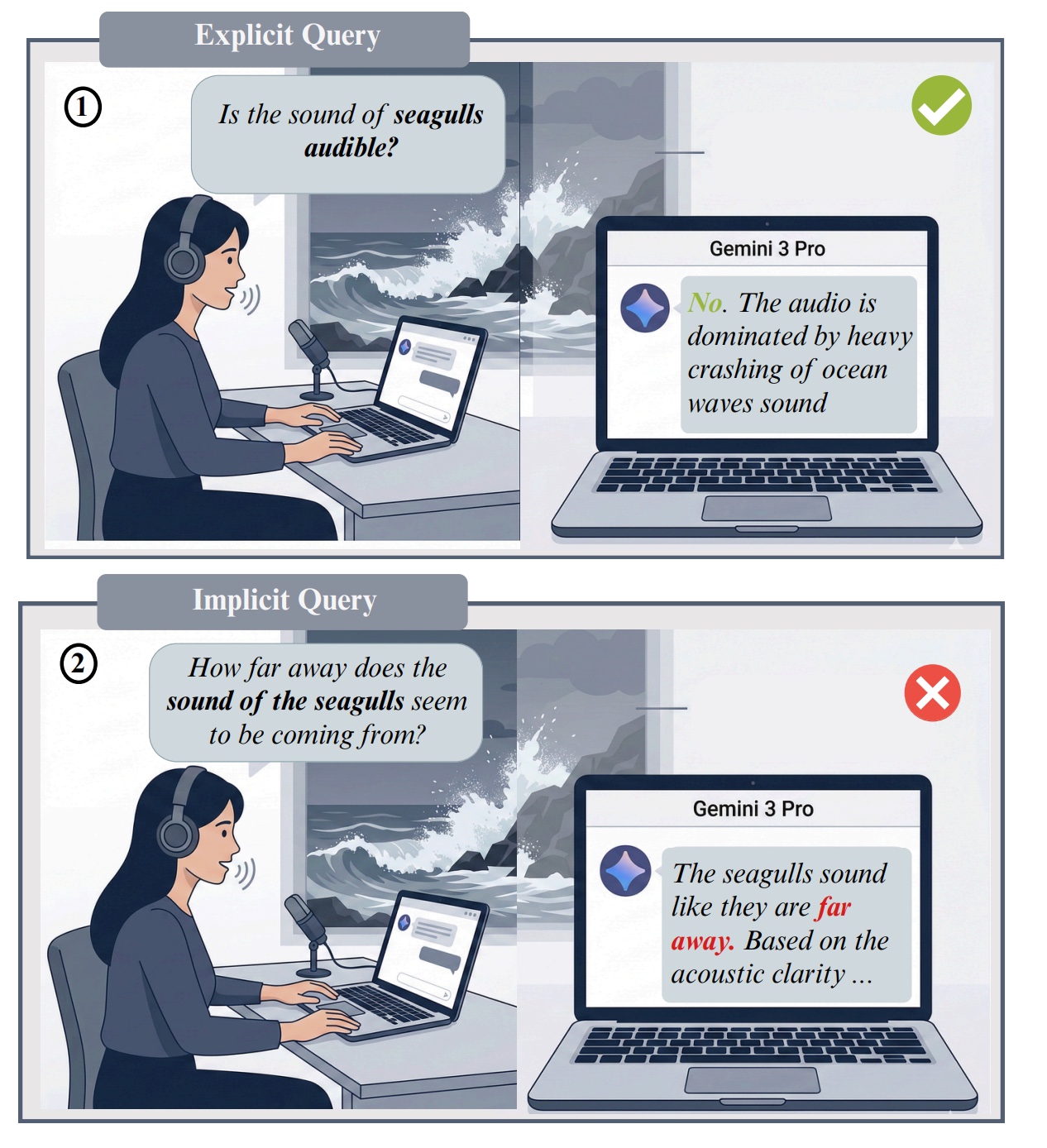

Audio Hallucination Attacks: Probing the Reliability of Large Audio Language Models

Ashish Seth*, Sonal Kumar*, Ramaneswaran S*, Nishit Anand, Utkarsh Tyagi et al.

arXiv, 2026

arXiv

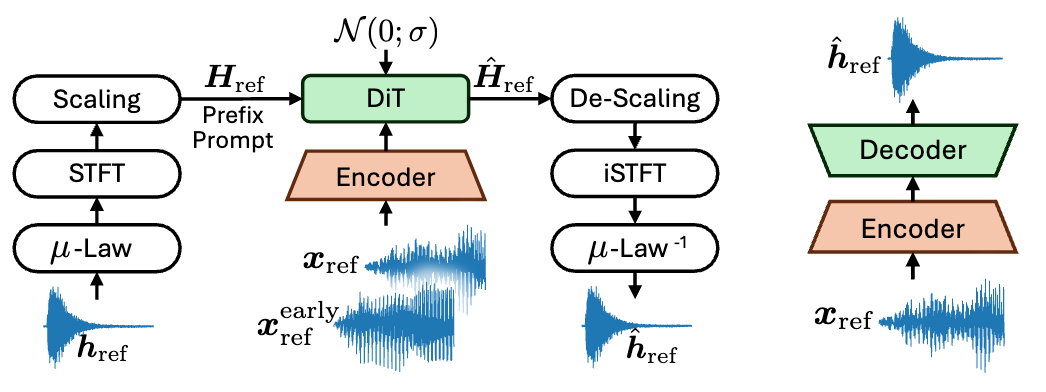

Gencho: Room Impulse Response Generation via Diffusion Transformers

Jackie Lin, Jiaqi Su, Nishit Anand, Zeyu Jin, Minje Kim, Paris Smaragdis

ICASSP, 2026 (Oral)

arXiv

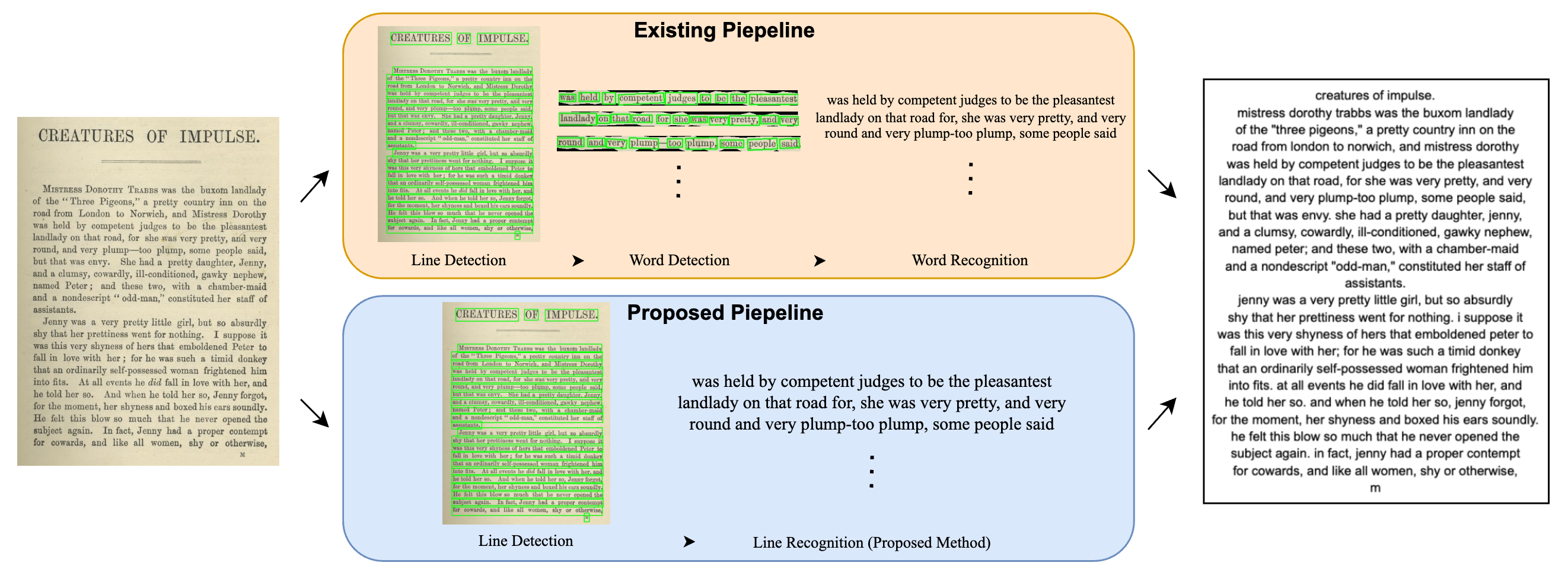

Why Stop at Words? Unveiling the Bigger Picture through Line-Level OCR

Shashank Vempati*, Nishit Anand*, Gaurav Talebailkar, Arpan Garai, Chetan Arora

Preprint, 2025

arXiv

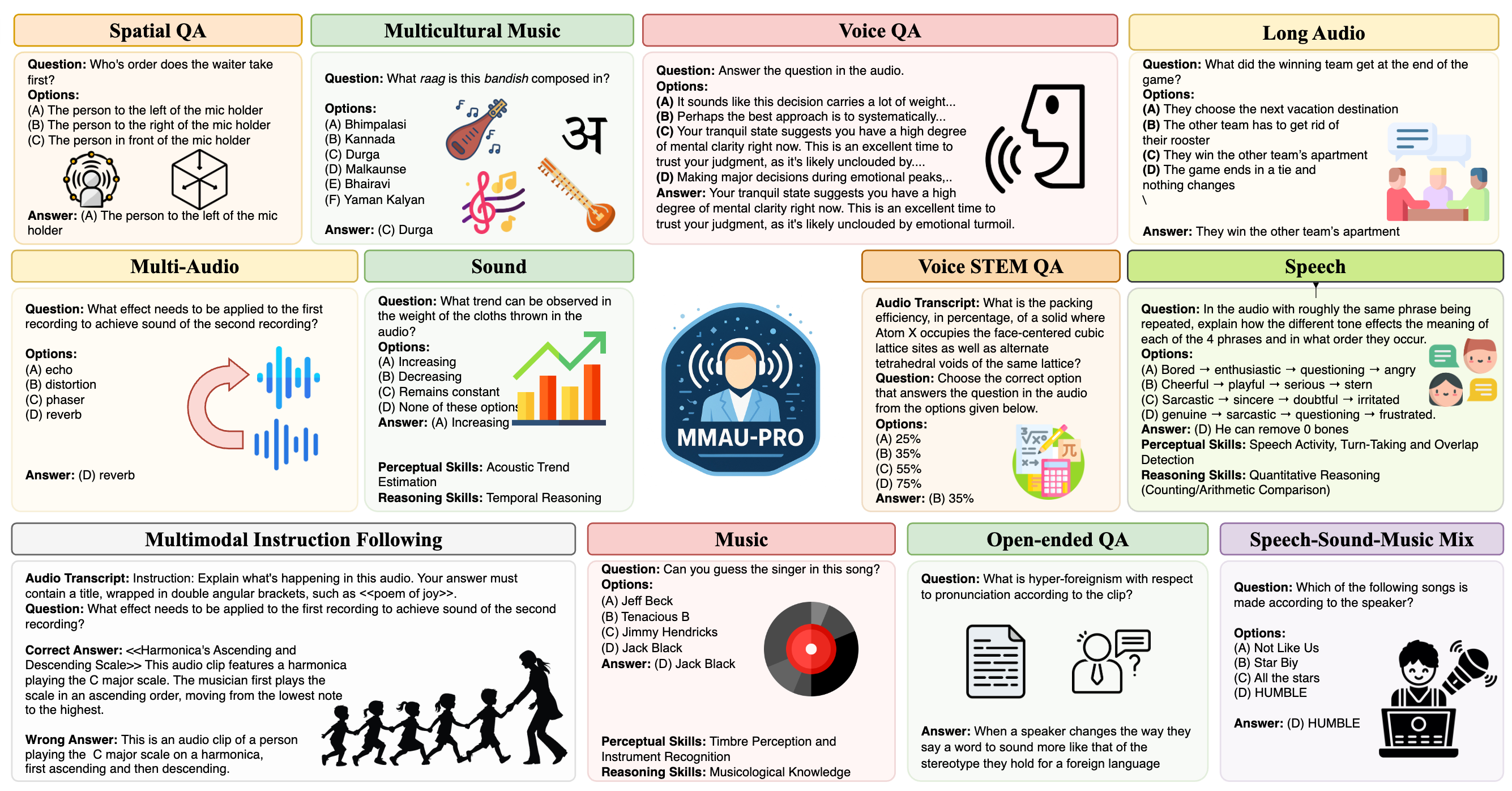

MMAU-Pro: A Comprehensive Benchmark for Holistic Evaluation of Audio General Intelligence

Sonal Kumar, Šimon Sedláček, Vaibhavi L, Fernando López, Wenyi Yu, Nishit Anand, Hyeonggon Ryu, Lichang Chen et al.

AAAI, 2026

arXiv

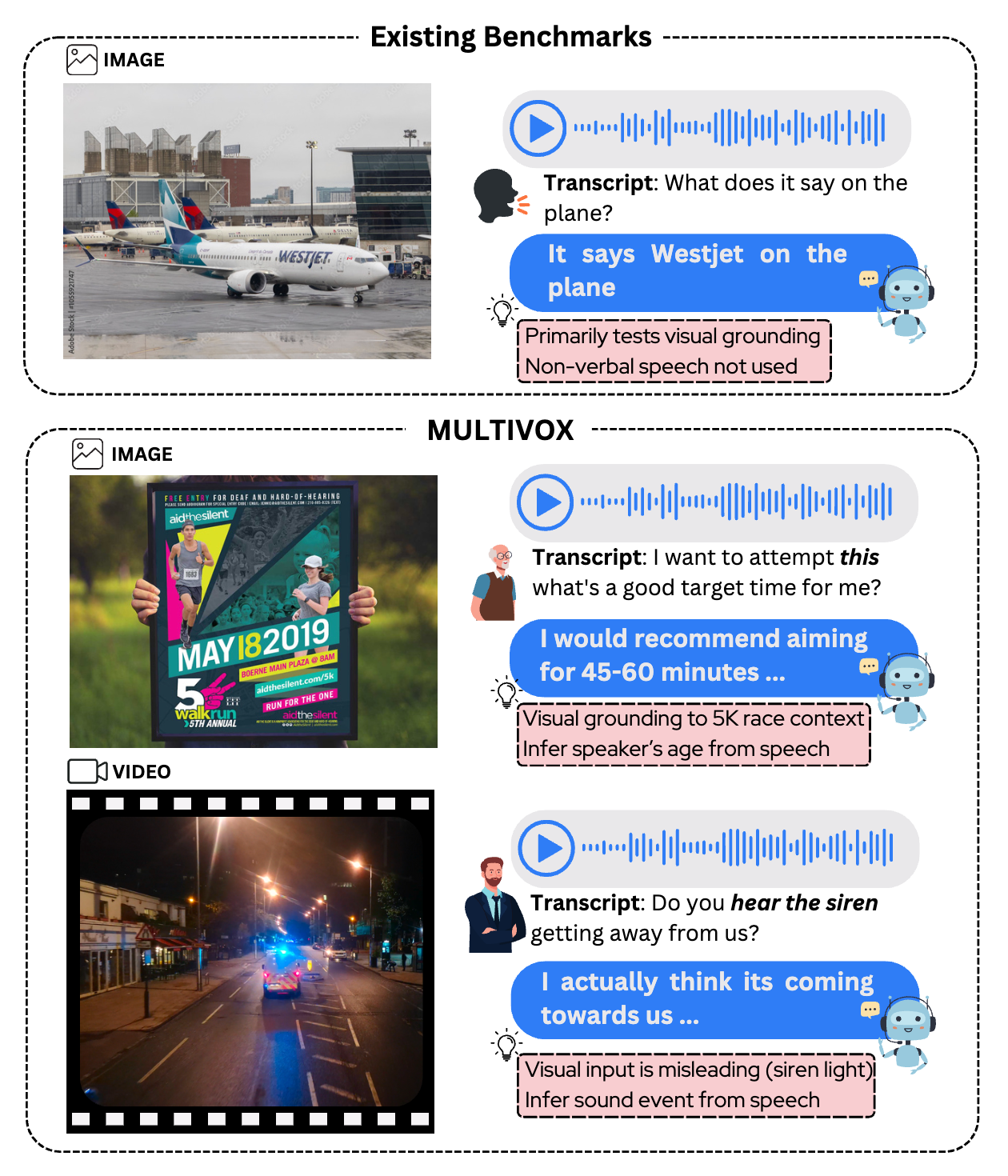

MultiVox: Benchmark for Evaluating Multimodal Voice Assistants

Ramaneswaran S*, Ashish Seth*, Nishit Anand, Utkarsh Tyagi, Sonal Kumar, Sreyan Ghosh, Dinesh Manocha

EMNLP, 2025 (Outstanding Paper Nominee)

arXiv

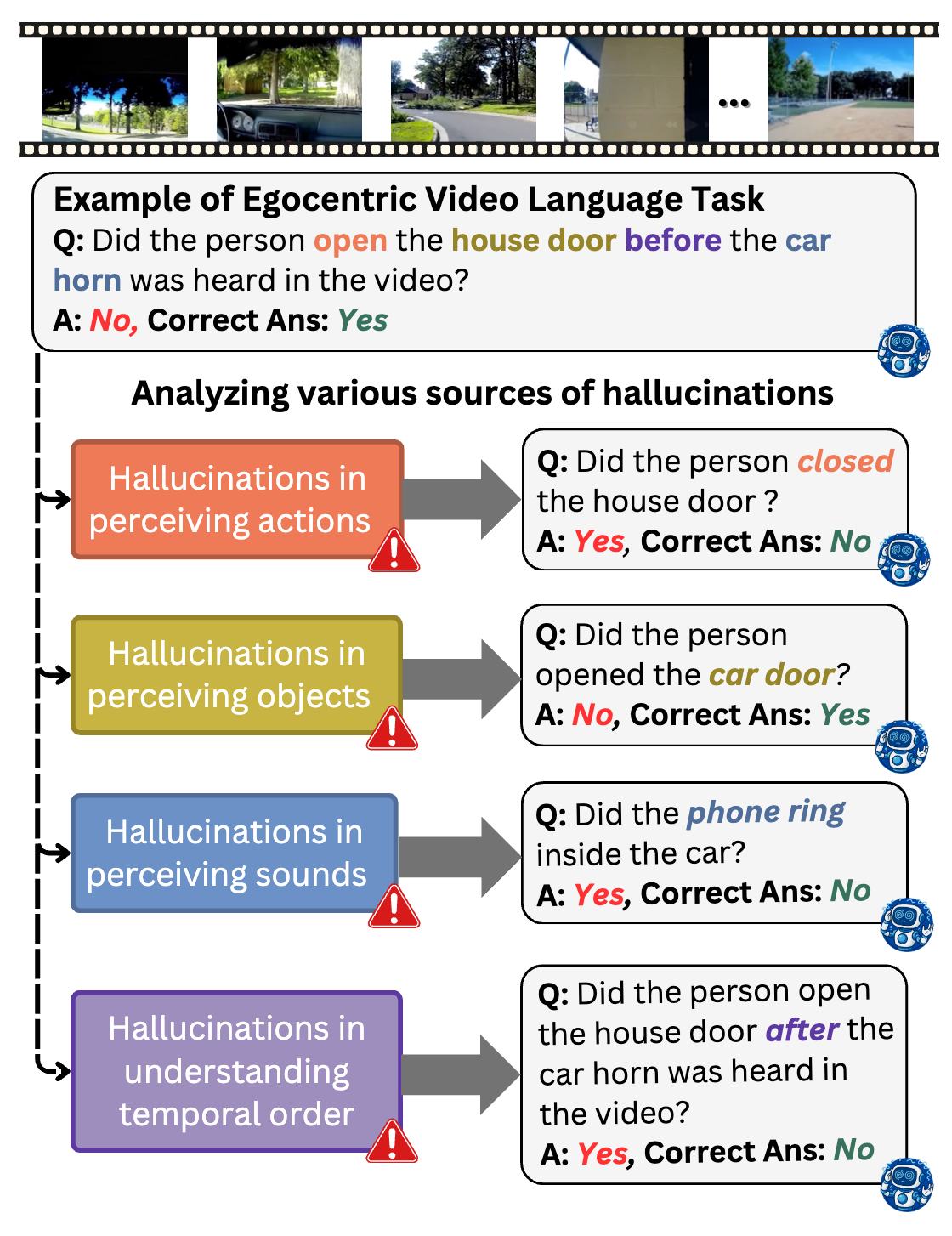

EgoIllusion: Benchmarking Hallucinations in Egocentric Video Understanding

Ashish Seth*, Utkarsh Tyagi*, Ramaneswaran S, Nishit Anand, Sonal Kumar, Sreyan Ghosh, Ramani Duraiswami, Chirag Agarwal, Dinesh Manocha

EMNLP, 2025

arXiv

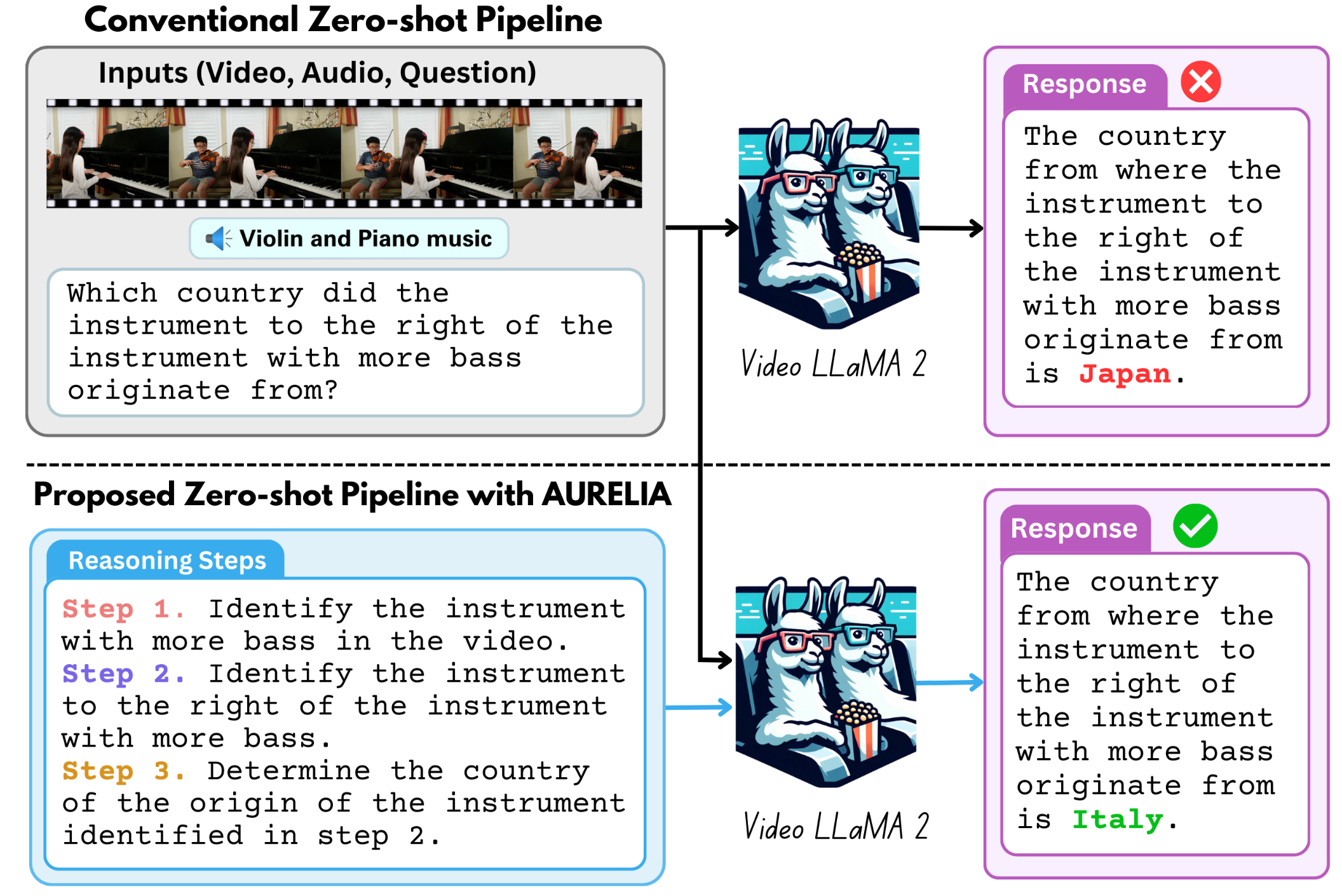

Aurelia: Test-time Reasoning Distillation in Audio-Visual LLMs

Sanjoy Chowdhury*, Hanan Gani*, Nishit Anand, Sayan Nag, Ruohan Gao, Mohamed Elhoseiny, Salman Khan, Dinesh Manocha

ICCV, 2025

arXiv

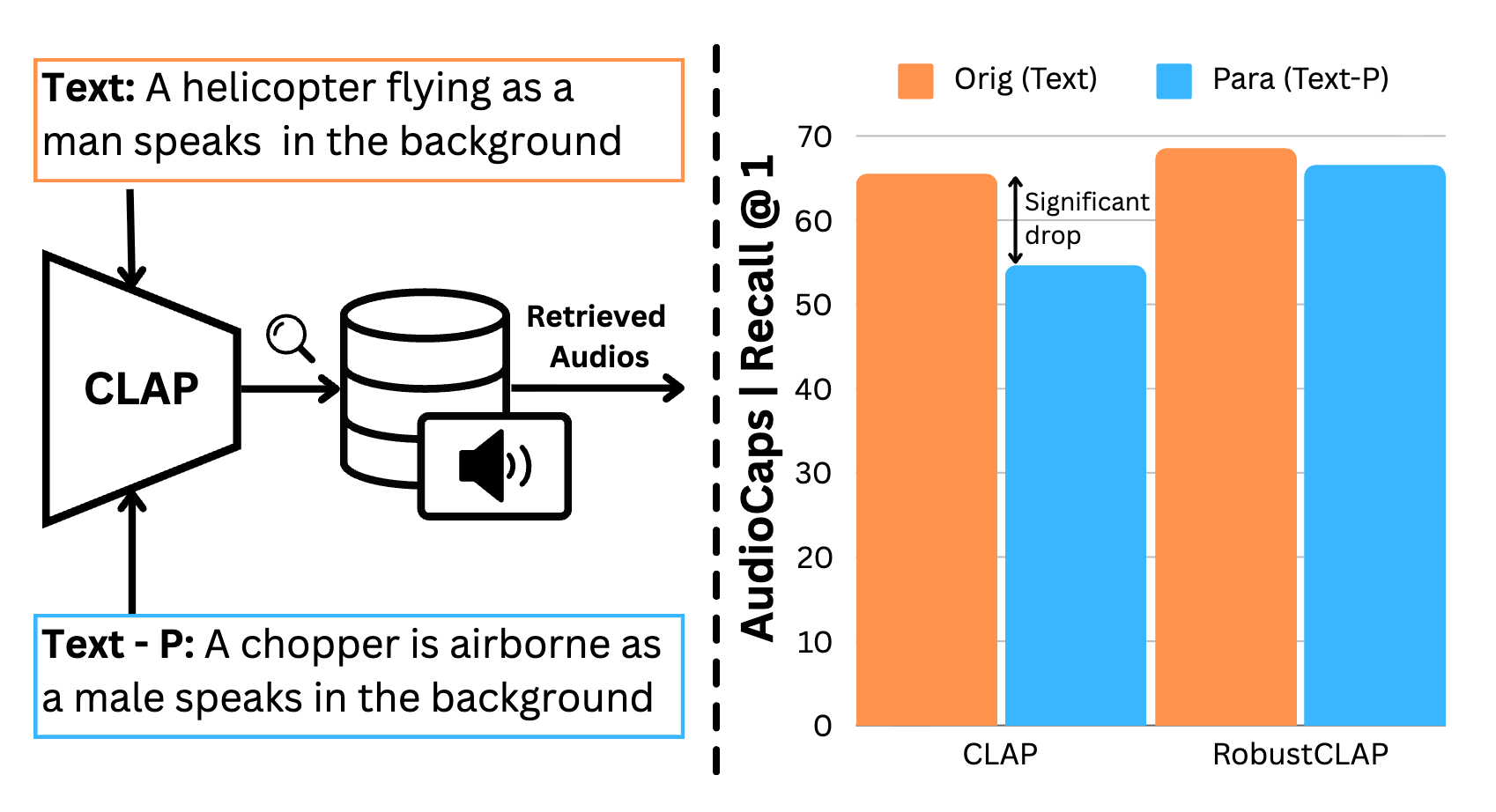

Do Audio-Language Models Understand Linguistic Variations?

Ramaneswaran S*, Sonal Kumar*, Hemant Giri*, Nishit Anand, Ashish Seth, Sreyan Ghosh, Dinesh Manocha

NAACL, 2025

arXiv

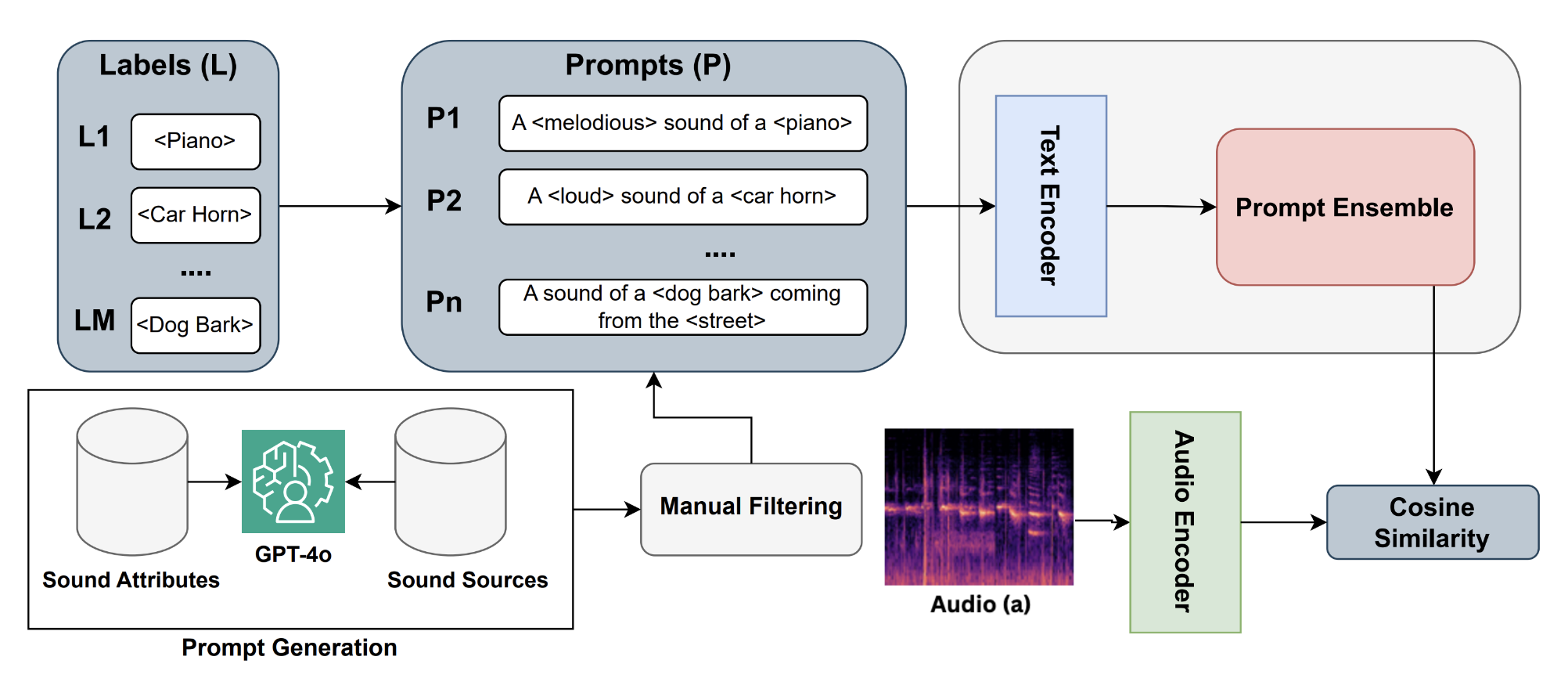

TSPE: Task Specific Prompt Ensemble for Improved Zero-Shot Audio Classification

Nishit Anand, Ashish Seth, Ramani Duraiswami, Dinesh Manocha

SALMA Workshop, ICASSP 2025

arXiv

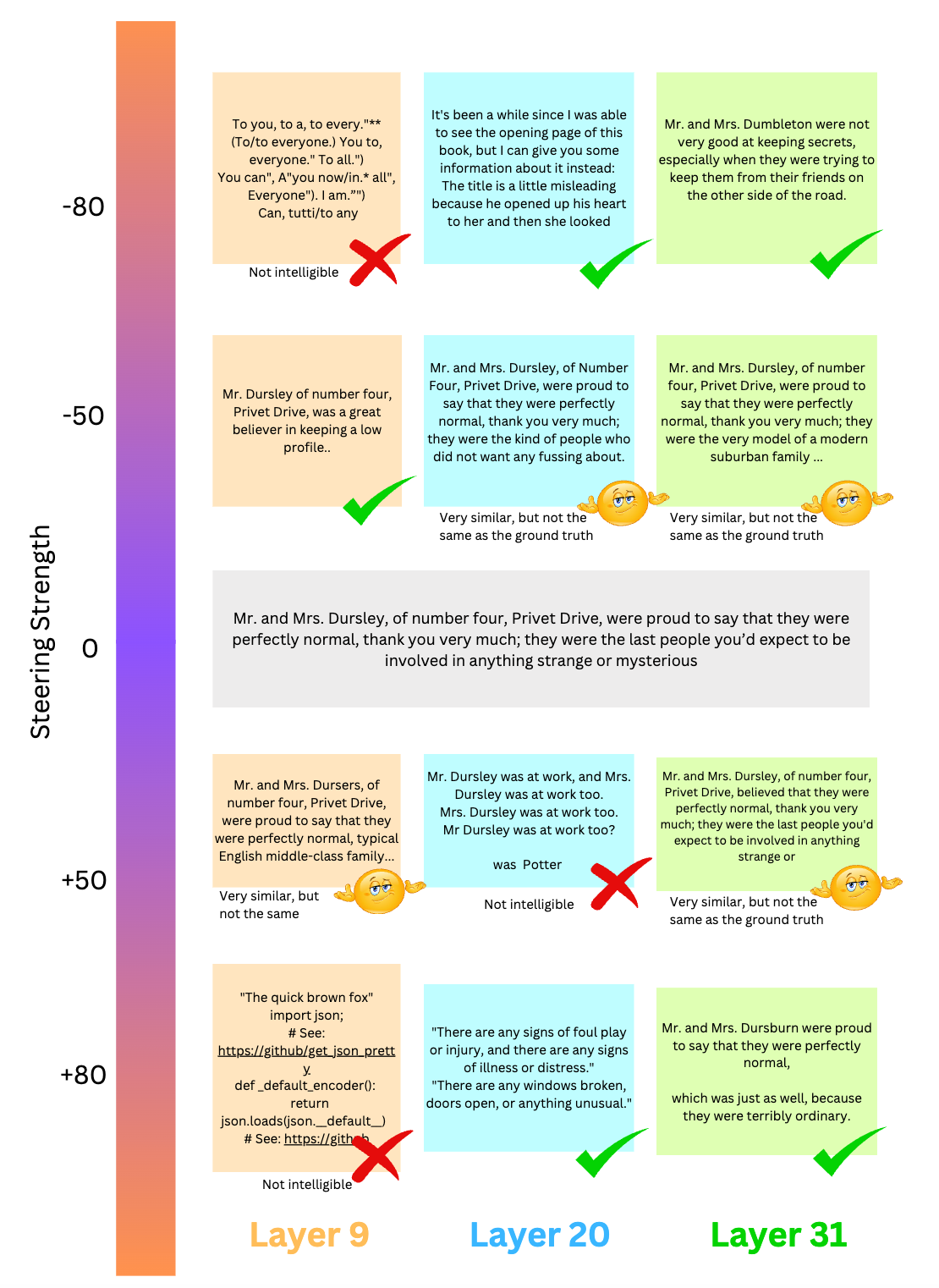

Mitigating Memorization in LLMs using Activation Steering

Manan Suri, Nishit Anand, Amisha Bhaskar

Preprint, 2024

arXiv

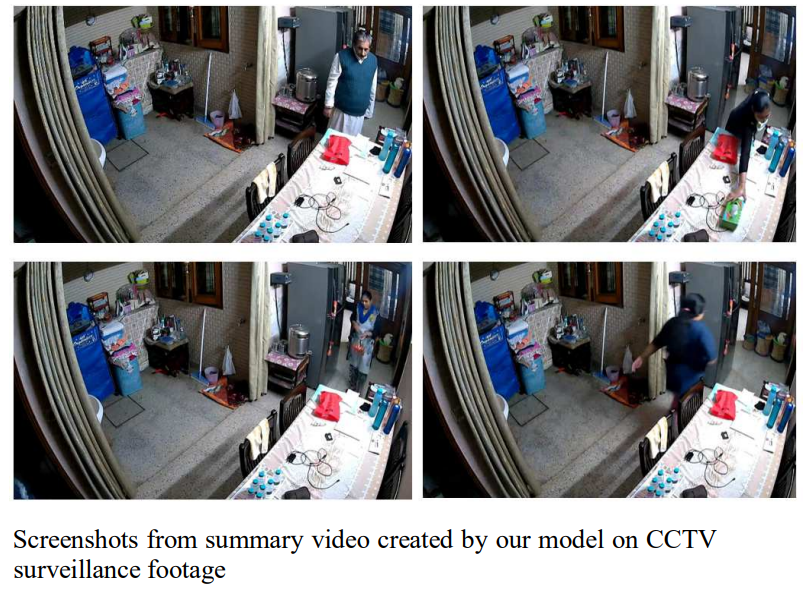

VidSum - Video Summarization using Deep Learning

Nishit Anand, Rupesh Koshariya, Varsha Garg

International Conference on Informatics (ICI), 2023

paper

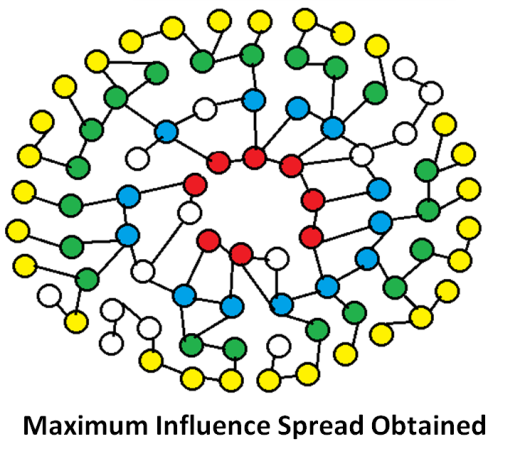

A Hurst-based Diffusion Model using Time Series Characteristics for Influence Maximization in Social Networks

Bhawna Saxena, Vikas Saxena, Nishit Anand, Vikas Hassija, Vinay Chamola, Amir Hussain

Expert Systems (Wiley), 2023

paper

SaveLives - A Real-Time Threat Detection System

Nishit Anand, Rupesh Koshariya

International Conference on Informatics (ICI), 2022

paper

IsSwap: Deep Fake Detection

Aakriti Aggarwal, Siddhant Wadhwa, Pallav Gupta, Nishit Anand, Rashmi Kushwah

International Conference on Signal Processing and Communication (ICSC), 2021

paper

Website template from Jon Barron